K8s from 10,000 Feet

A crisp 10,000‑ft tour of Kubernetes with clear mental models, diagrams, and a quick hands‑on to demystify the control plane.

At first glance, Kubernetes can feel like a sprawling city of jargon and moving parts. That reaction is normal. The good news: once the mental model clicks, the system feels elegant and predictable.

This article gives you a wide‑angle view of Kubernetes—the control plane, how the core components work together, and a quick local demo so you can see it in action. Think of it as a map you can keep referring back to as you go deeper.

What you'll learn:

- How the control plane fits together at a high level

- What the API Server, etcd, Scheduler, and Controllers actually do

- How to spin up a local cluster and check its health quickly

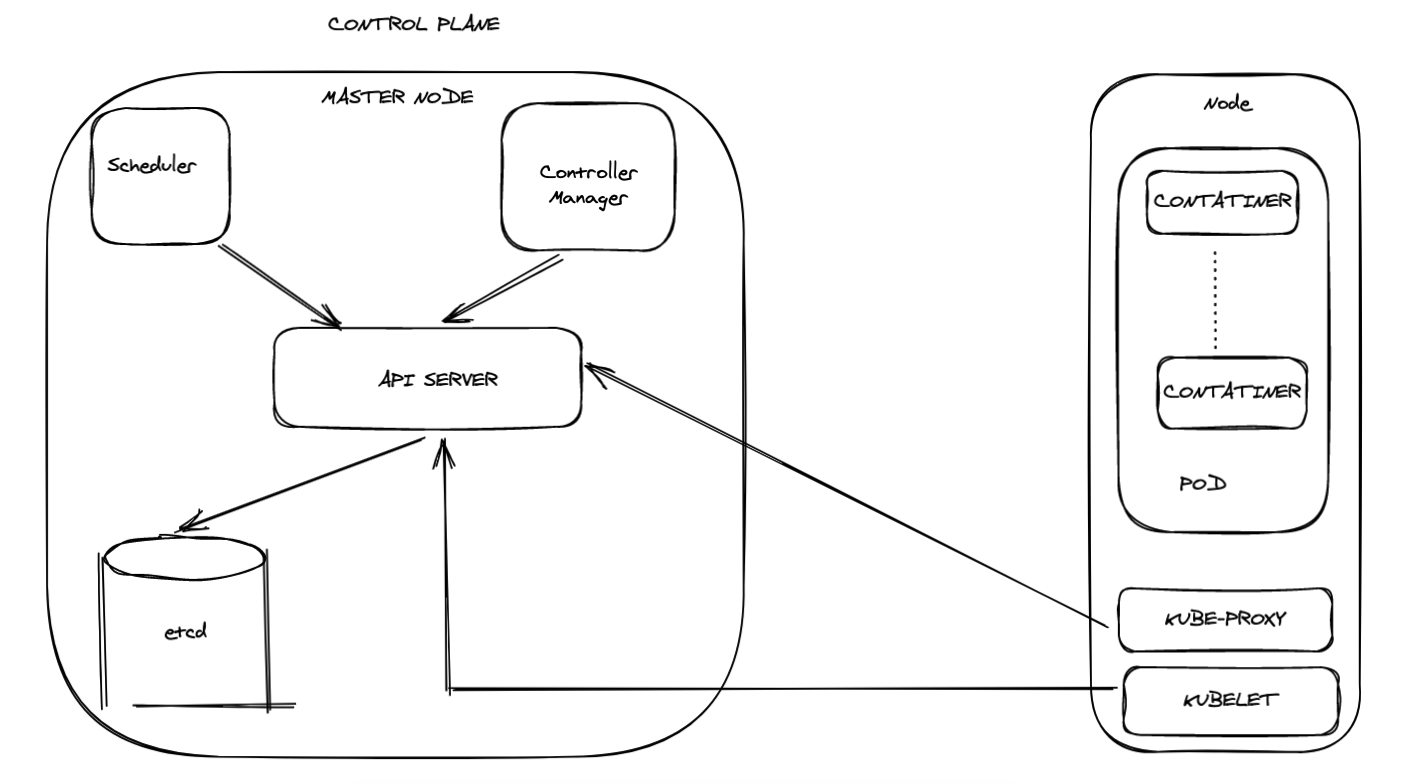

Architecture diagram of control plane and node

Terminology

Control Plane: The brain of the cluster. It accepts your intent, stores cluster state, schedules work, and continuously reconciles reality back to the desired state.

Components that make up the control plane:

- API Server — The front door to the cluster

- etcd — The source of truth for cluster state

- Scheduler — Decides where pods run

- Controller Manager — Reconciles desired vs actual state

Control Plane = Brain of k8s

What the Control Plane does:

- Accepts desired state through the API Server

- Persists canonical state in etcd

- Schedules Pods to nodes via the Scheduler

- Continuously reconciles actual state via Controllers

API Server: The face of the control plane

The API Server is the single, authoritative front door to the cluster. Every component—your kubectl, the controllers, the scheduler—talks to it.

What it does:

- Exposes a versioned REST API for all Kubernetes resources

- Authenticates and authorizes each request, then runs admission control to mutate and validate it

- Persists canonical state to etcd and serves watch streams for change events

Key principle: Components never talk to etcd directly; they always go through the API Server.

Any kubectl command you run only ever contacts the API Server.
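To make the "front door" idea concrete, here is a minimal toy model in Python. It is not the real API Server and uses no Kubernetes library: `ToyAPIServer`, `apply`, and `watch` are hypothetical names invented for this sketch, and the validation rule is deliberately trivial. It only illustrates the order of operations described above: validate, persist, then notify watchers.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Toy model only: none of these names belong to Kubernetes or its clients.
Event = Tuple[str, str, dict]  # (event type, object name, object body)


@dataclass
class ToyAPIServer:
    store: Dict[str, dict] = field(default_factory=dict)  # stands in for etcd
    watchers: List[Callable[[Event], None]] = field(default_factory=list)

    def watch(self, callback: Callable[[Event], None]) -> None:
        """Register a client (controller, scheduler, ...) for change events."""
        self.watchers.append(callback)

    def apply(self, name: str, obj: dict) -> None:
        """Validate the object, persist it, then notify every watcher."""
        if "kind" not in obj:
            raise ValueError("rejected: object has no 'kind'")
        event_type = "MODIFIED" if name in self.store else "ADDED"
        self.store[name] = obj  # only the server ever touches the store
        for notify in self.watchers:
            notify((event_type, name, obj))


seen: List[Event] = []
api = ToyAPIServer()
api.watch(seen.append)
api.apply("web", {"kind": "Pod", "image": "nginx"})
api.apply("web", {"kind": "Pod", "image": "nginx:1.27"})
print([(etype, name) for etype, name, _ in seen])
# [('ADDED', 'web'), ('MODIFIED', 'web')]
```

Clients in this sketch never read or write `store` themselves. They submit objects and react to events, which mirrors how controllers and the scheduler depend on the API Server rather than on etcd.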

etcd: The only stateful component (distributed key-value store)

etcd is the cluster's source of truth—a highly available, consistent, distributed key‑value store. All Kubernetes objects (Pods, Deployments, Services, Secrets, …) ultimately live here.

Why it matters:

- Stores desired state durably so the cluster can recover from failures

- Uses quorum for writes; protect it, back it up, and keep it small and fast

Never write to it directly — you will bypass validation and invariants!
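The quorum rule mentioned above is simple arithmetic: a write succeeds only when a majority of members acknowledge it. The short Python sketch below works through that arithmetic. The function names are made up for illustration, and it models only the majority rule, not etcd itself.

```python
def quorum(members: int) -> int:
    """Smallest majority of a cluster with `members` voting members."""
    return members // 2 + 1


def fault_tolerance(members: int) -> int:
    """How many members can fail while a majority is still reachable."""
    return members - quorum(members)


for n in (1, 3, 4, 5):
    print(f"{n} members: quorum={quorum(n)}, tolerates {fault_tolerance(n)} failure(s)")
# 1 members: quorum=1, tolerates 0 failure(s)
# 3 members: quorum=2, tolerates 1 failure(s)
# 4 members: quorum=3, tolerates 1 failure(s)
# 5 members: quorum=3, tolerates 2 failure(s)
```

Note that four members tolerate no more failures than three, which is why odd cluster sizes are the usual choice.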

Scheduler: Decides where the pods run

The Scheduler's job is placement. When you create a Pod, it starts life as "Pending." The Scheduler filters out nodes that cannot run the Pod, scores the remaining candidates, and binds the Pod to the best one.

What influences scheduling:

- Resource requests (CPU, memory); limits are enforced at runtime and do not drive placement

- Node taints/tolerations, affinities/anti‑affinities, topology spread

- Custom priorities and scheduling plugins from the ecosystem
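The placement decision can be pictured as a filter step followed by a score step. The Python sketch below is a simplified illustration under stated assumptions: the `Node` and `Pod` classes, the single boolean taint, and the "most headroom wins" scoring rule are all hypothetical and far cruder than the real scheduler's plugins.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu_m: int        # unreserved CPU, in millicores
    free_mem_mi: int       # unreserved memory, in MiB
    tainted: bool = False  # simplification: one yes/no taint


@dataclass
class Pod:
    name: str
    cpu_request_m: int
    mem_request_mi: int
    tolerates_taint: bool = False


def feasible(pod: Pod, node: Node) -> bool:
    """Filter step: can this node run the Pod at all?"""
    if node.tainted and not pod.tolerates_taint:
        return False
    return (node.free_cpu_m >= pod.cpu_request_m
            and node.free_mem_mi >= pod.mem_request_mi)


def score(pod: Pod, node: Node) -> float:
    """Score step (assumed rule): prefer the node left with the most headroom."""
    cpu_left = (node.free_cpu_m - pod.cpu_request_m) / max(node.free_cpu_m, 1)
    mem_left = (node.free_mem_mi - pod.mem_request_mi) / max(node.free_mem_mi, 1)
    return (cpu_left + mem_left) / 2


def schedule(pod: Pod, nodes: List[Node]) -> Optional[str]:
    """Return the chosen node name, or None if the Pod must stay Pending."""
    candidates = [n for n in nodes if feasible(pod, n)]
    if not candidates:
        return None
    return max(candidates, key=lambda n: score(pod, n)).name


nodes = [
    Node("node-a", free_cpu_m=500, free_mem_mi=1024),
    Node("node-b", free_cpu_m=2000, free_mem_mi=4096),
    Node("node-c", free_cpu_m=4000, free_mem_mi=8192, tainted=True),
]
pod = Pod("web", cpu_request_m=250, mem_request_mi=256)
print(schedule(pod, nodes))  # node-b
```

Here `node-c` has the most free capacity but is filtered out because the Pod does not tolerate its taint, so the score step only compares `node-a` and `node-b`.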

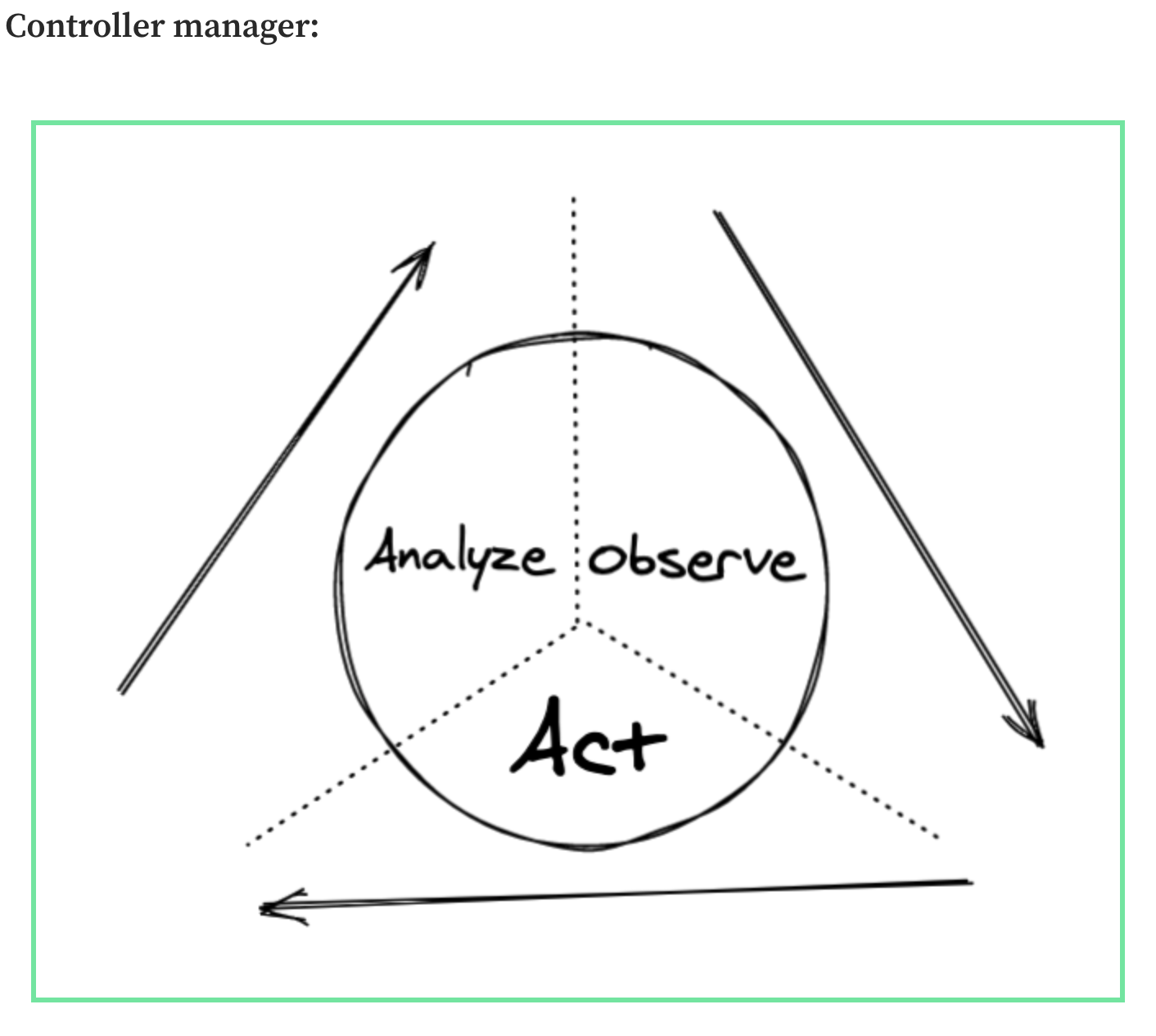

Controller Manager

Controllers run the reconciliation loop: observe → analyze → act, forever.

Each controller focuses on one type of resource. For example, the Deployment controller:

- Watches Deployment objects via the API Server

- Compares the desired replica count to the Pods actually running

- Creates or removes Pods (through the ReplicaSets it manages) until reality matches intent
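The observe → analyze → act loop is easier to see in code. The following Python sketch is a toy, not the real Deployment controller: it collapses the Deployment/ReplicaSet layering into a single function, keeps "running Pods" in a plain list, and uses a hypothetical `web-N` naming scheme.

```python
import itertools
from typing import List

_ids = itertools.count(1)  # hypothetical name generator for new Pods


def reconcile(desired: int, running: List[str]) -> List[str]:
    """One reconciliation pass; returns the actions it took."""
    actions: List[str] = []
    while len(running) < desired:   # too few Pods: create more
        name = f"web-{next(_ids)}"
        running.append(name)
        actions.append(f"create {name}")
    while len(running) > desired:   # too many Pods: remove the newest
        actions.append(f"delete {running.pop()}")
    return actions


pods: List[str] = []
print(reconcile(3, pods))  # ['create web-1', 'create web-2', 'create web-3']
pods.remove("web-2")       # simulate a Pod crashing
print(reconcile(3, pods))  # ['create web-4']
print(reconcile(1, pods))  # ['delete web-4', 'delete web-3']
print(reconcile(1, pods))  # []
```

The last call returns an empty list: once actual state matches desired state, a reconciliation pass is a no-op. That idempotence is what lets controllers run the loop forever.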

The Controller Manager bundles many such controllers into a single process. Each one watches the API Server for changes to its resources (Deployments, Services, etc.) and performs the necessary operations for every change.

Some of the controllers are:

- Replication Manager (a controller for ReplicationController resources)

- ReplicaSet, DaemonSet, and Job controllers

- Deployment controller

- StatefulSet controller

- Node controller

- Service controller

- Endpoints controller

- Namespace controller

- PersistentVolume controller

Hands-On: Running a Local Cluster

Enough theory. Let’s spin up a tiny cluster locally so the ideas become tangible. We’ll use Minikube, but kind (Kubernetes in Docker) is a great alternative.

Install and start Minikube on macOS:

brew install minikube

minikube start

By default, Minikube spins up a single-node cluster on your local machine.

Once the node is up, every kubectl command talks to the API Server, as we saw earlier. Let's run the command below and check the response.

kubectl get componentstatuses

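The API Server exposes an API resource called ComponentStatus, which shows the health status of each control plane component. Note that ComponentStatus has been deprecated since Kubernetes v1.19, so recent clusters print a deprecation warning alongside the result.

The response should list each control plane component along with its health status.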

What's Next?

In the next articles in this series, we will explore the kubelet and kube-proxy, dive deeper into some of the control plane components, and look at running Kubernetes in the cloud (DigitalOcean).

Thanks for reading!